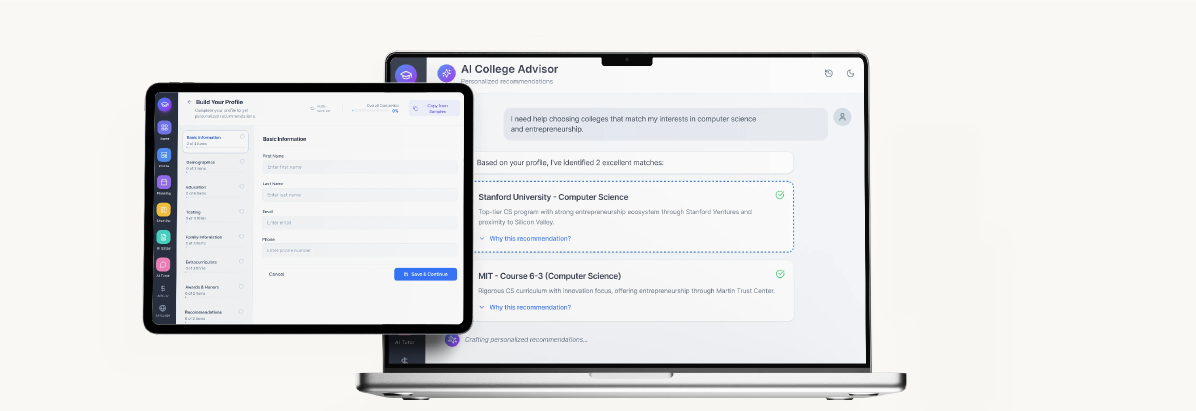

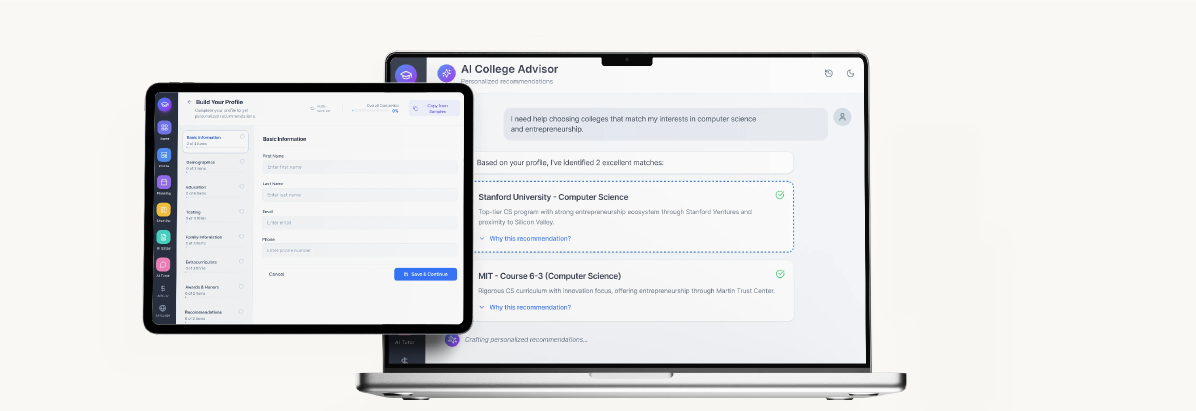

DreamCollege AI — platform across devices

Building trust in AI-powered college counseling — from blank Figma file to 150,000 students in 4 months.

Outcomes

I appreciate you taking the time to review this case study. I chose this example because it demonstrates three things: shipping fast on a high-visibility 0→1 product; identifying and resolving a key friction point using real data; and influencing cross-functional roadmap decisions through user research.

Headquarters: Frisco, Texas

Founded: 2023

Industry: SaaS · EdTech

Company Size: 11–50

Skills: UX Research · Systems Thinking · Prototyping · IA · Usability Testing · Localisation

Platform: Web · Mobile · Conversational AI

When I joined DreamCollege AI at the ideation phase, the college admissions landscape was facing a critical trust deficit. Students and families were making life-changing decisions worth hundreds of thousands of dollars — yet they were hesitant to trust AI with guidance that could shape their futures.

Key problem areas identified in discovery

Conversational AI as the driver of all four outcome areas

I led end-to-end design from 0→1, translating ambiguous, AI-heavy business and user requirements into clear, user-centered experiences across conversational AI interfaces, multi-step onboarding, payment flows, and core product features.

Research scope — 75 total sessions across 4 methods

Research touchpoints breakdown (n=75)

Through 35+ student interviews and 12 parent/counselor sessions, three patterns kept surfacing. Naming them gave the whole team a shared framework for every design decision that followed.

"It's like asking for directions from someone wearing a blindfold — even if they're right, I can't trust it."

— High school student, discovery interview

Discovery and design ran in parallel due to the 4-month runway. Competitive analysis of 8 platforms, journey mapping across 6 personas, and heuristic evaluation of AI chatbots in education informed every decision.

Project timeline — 12 weeks, 6 phases

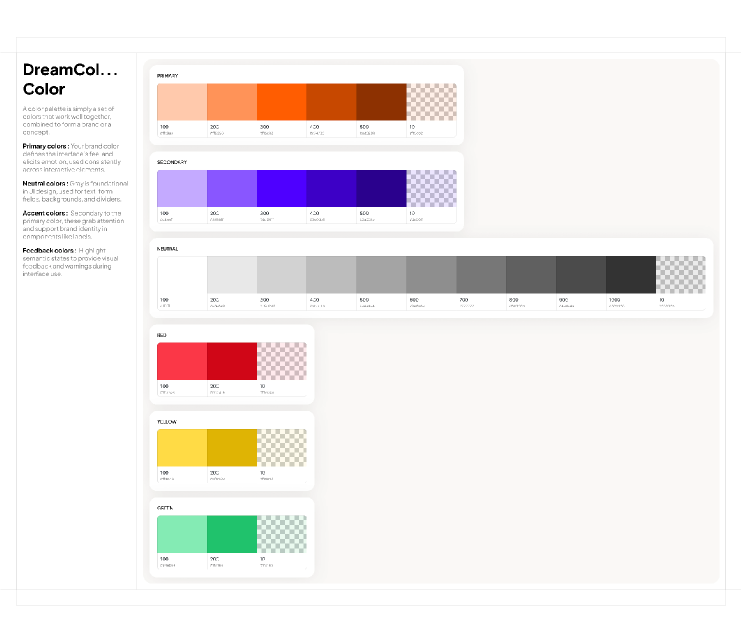

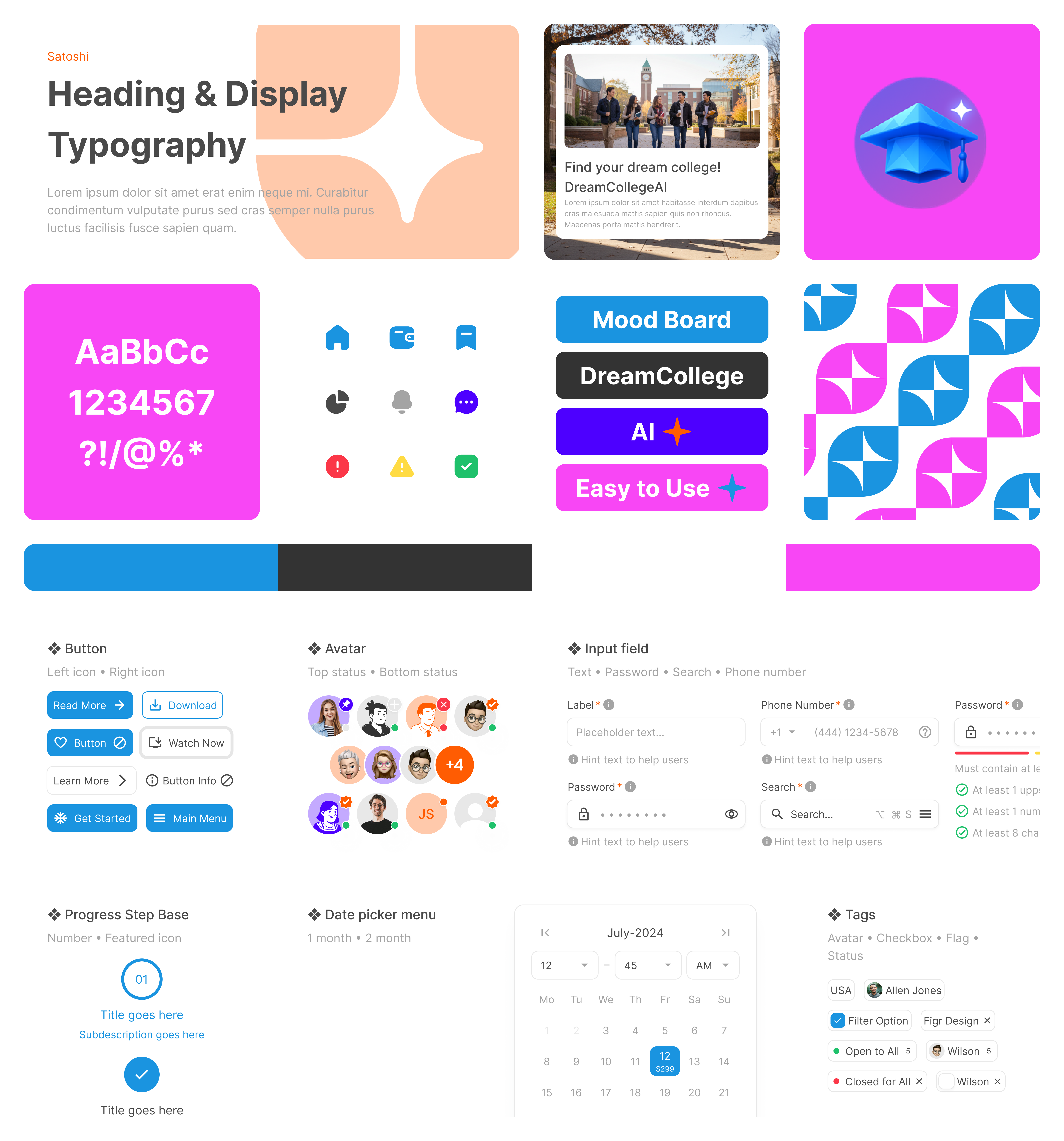

Working closely with the CEO and branding stakeholders, I developed a visual language balancing approachability with credibility. A gradient-heavy palette (blues → purples) conveyed innovation while differentiating us from corporate competitors.

Color system — primary, accent, neutral, semantic

Design system overview — typography, components, screens

One of the biggest UX challenges: keeping users engaged while the LLM generated responses (3–8 second delays). Initial testing showed 31% of users clicked away during loading, reporting they felt "ignored" or unsure if the system was working.

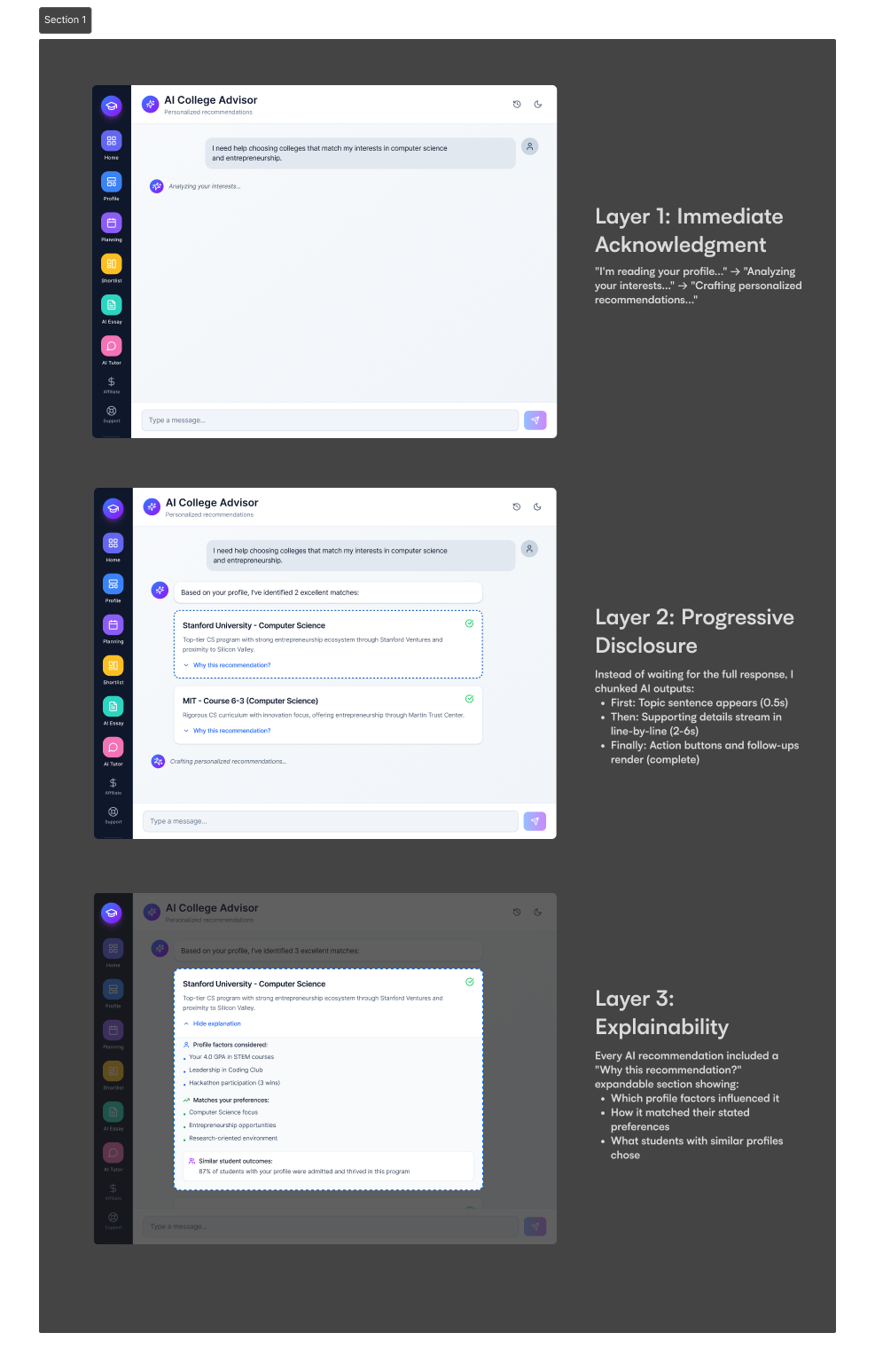

I designed a three-layer trust system: immediate acknowledgment (streaming animation), progressive disclosure (structured response format), and explainability (inline "why" callouts for every recommendation).
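As a rough illustration of how the three layers could be sequenced, here is a minimal Python sketch. It is not the production implementation: `Recommendation`, `UiEvent`, and `render_response` are hypothetical names, and the sketch assumes recommendations arrive one at a time from the model.

```python
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass
class Recommendation:
    title: str
    why: str  # text for the inline "why" callout (hypothetical field)


@dataclass
class UiEvent:
    layer: str    # "acknowledge", "disclose", or "explain"
    payload: str


def render_response(question: str, recs: Iterable[Recommendation]) -> Iterator[UiEvent]:
    """Yield UI events in the order the three layers would appear."""
    # Layer 1: acknowledge immediately, before any model output is consumed.
    yield UiEvent("acknowledge", f"Working on: {question}")
    for rec in recs:
        # Layer 2: disclose each recommendation as its own structured section.
        yield UiEvent("disclose", rec.title)
        # Layer 3: attach the reasoning behind that recommendation.
        yield UiEvent("explain", f"Why: {rec.why}")


if __name__ == "__main__":
    sample = [Recommendation("Example University", "matches the stated major and budget")]
    for event in render_response("Which colleges fit my profile?", sample):
        print(event.layer, "|", event.payload)
```

Because `render_response` is a generator, the acknowledgment event is emitted before the first recommendation is read from `recs`. That ordering is what lets the interface respond during the 3–8 second generation delay.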

Three-layer AI transparency model

Drop-off −77% · Follow-up AI engagement +63%

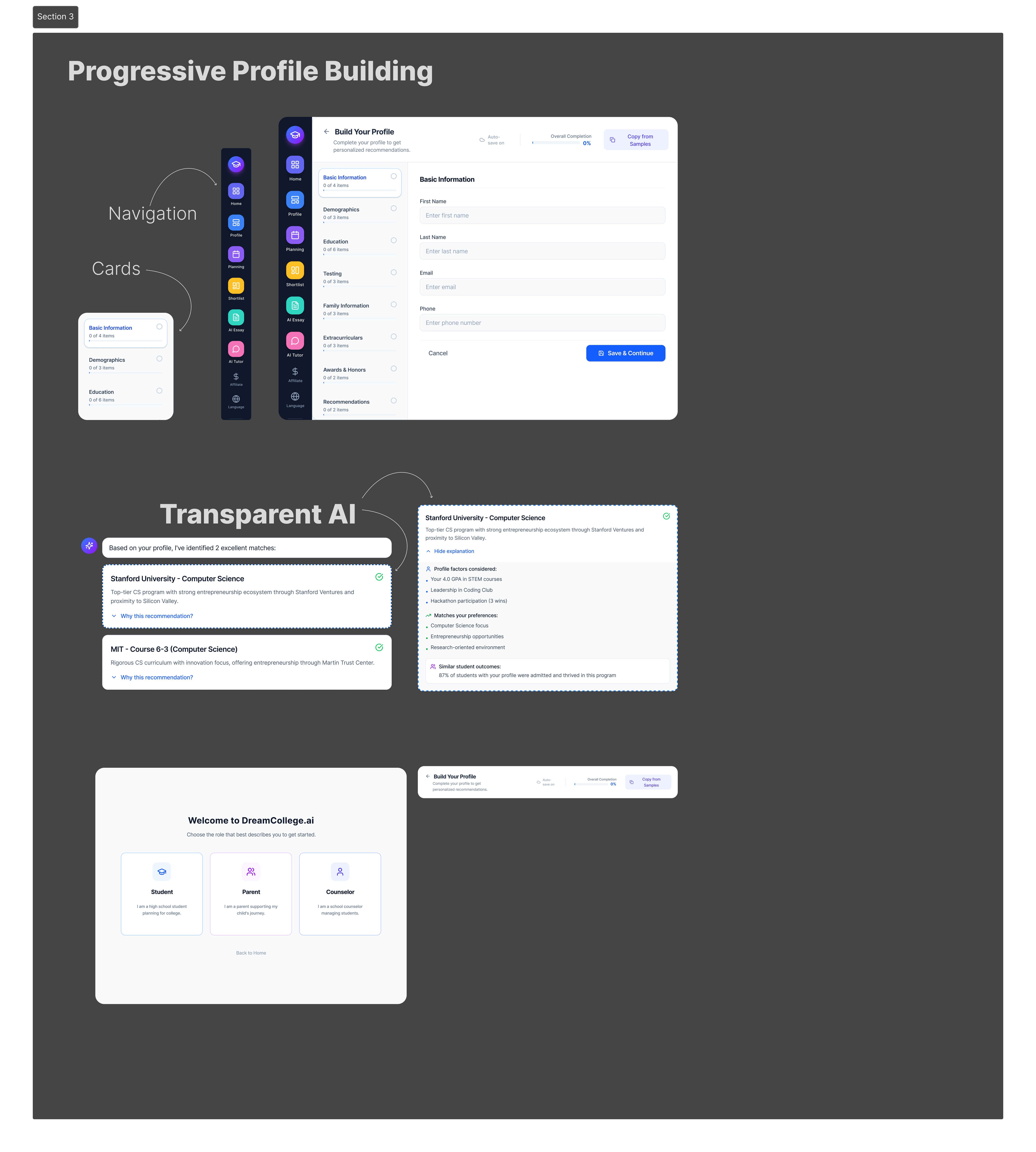

Progressive profile building + transparent AI in production

Decision 1: Profile-First Onboarding · Decision 2: Copy Profile from Sample · Decision 3: Collapsible Navigation

Hotjar heatmaps revealed that users were clicking on non-interactive elements (icons, headings), expecting them to be buttons. I either made these elements genuinely interactive or removed them to reduce confusion.

Tobii eye-tracking (12 sessions) showed that users were skipping important context text because it was styled too similarly to body copy. I introduced icon badges, colored callouts, and larger font sizes at key decision points.

The CEO wanted to prioritize monetization features (payment, upgrades) while research clearly showed we needed to nail core trust and usability first. I created a "Trust-First Roadmap" presentation showing user research quotes, conversion funnel data with a 47% drop-off before payment, and a proposed sequence: fix core experience → build trust → introduce premium features.

Outcome: The CEO agreed to delay payment v2 by 2 weeks to focus on profile UX improvements. This resulted in 22% higher conversion when payment was eventually released.

AI products introduce design challenges that traditional UX patterns don't solve: responses change as the model learns, and user control must be preserved throughout. On top of that, a user base spanning 30+ countries created unexpected localization complexity.

AI design challenges — surface vs. depth

DreamCollege didn't start with a blank canvas. The PM had a product direction — features, flow, even some solutions already sketched. I was brought in to make that direction feel right for 150K+ students navigating one of the most stressful decisions of their lives. That's a different design problem: you're not inventing, you're validating, shaping, and sometimes pushing back — fast.

Every project runs on the time–scope–quality triangle, and you only ever get two. Showcase deadlines were fixed. Feature scope was PM-defined. So quality became the conscious dial — deciding which surfaces got polished and which shipped at 80%, and being deliberate about that call every sprint.

"V1 doesn't need to be perfect — it needs to be shippable. Perfection is what V2 is for."

What I'd Do Differently

What I'm Proud Of

Weekly Design-Dev Sync

Design Handoff Process

Conclusion

DreamCollege AI taught me that great design isn't about making things pretty — it's about building trust when stakes are high, simplifying complexity without losing depth, and creating systems that scale.

In 4 months, we went from a blank Figma file to a platform serving 150,000 students making one of the biggest decisions of their lives. The real achievement was earning their trust.
