DreamCollege AI

Project: DreamCollege AI: Building Trust in AI-Powered College Counseling

Description: AI-powered SaaS platform for college admissions counseling

Org: DreamCollege

Team: Cross-functional collaboration with 4 developers, 4 UX designers, CEO, CFO, and key stakeholders

Timeline: 4 months

Role: Lead Designer (0-1)

(Research → IA → UX → UI → Prototyping → Testing)

The Challenge

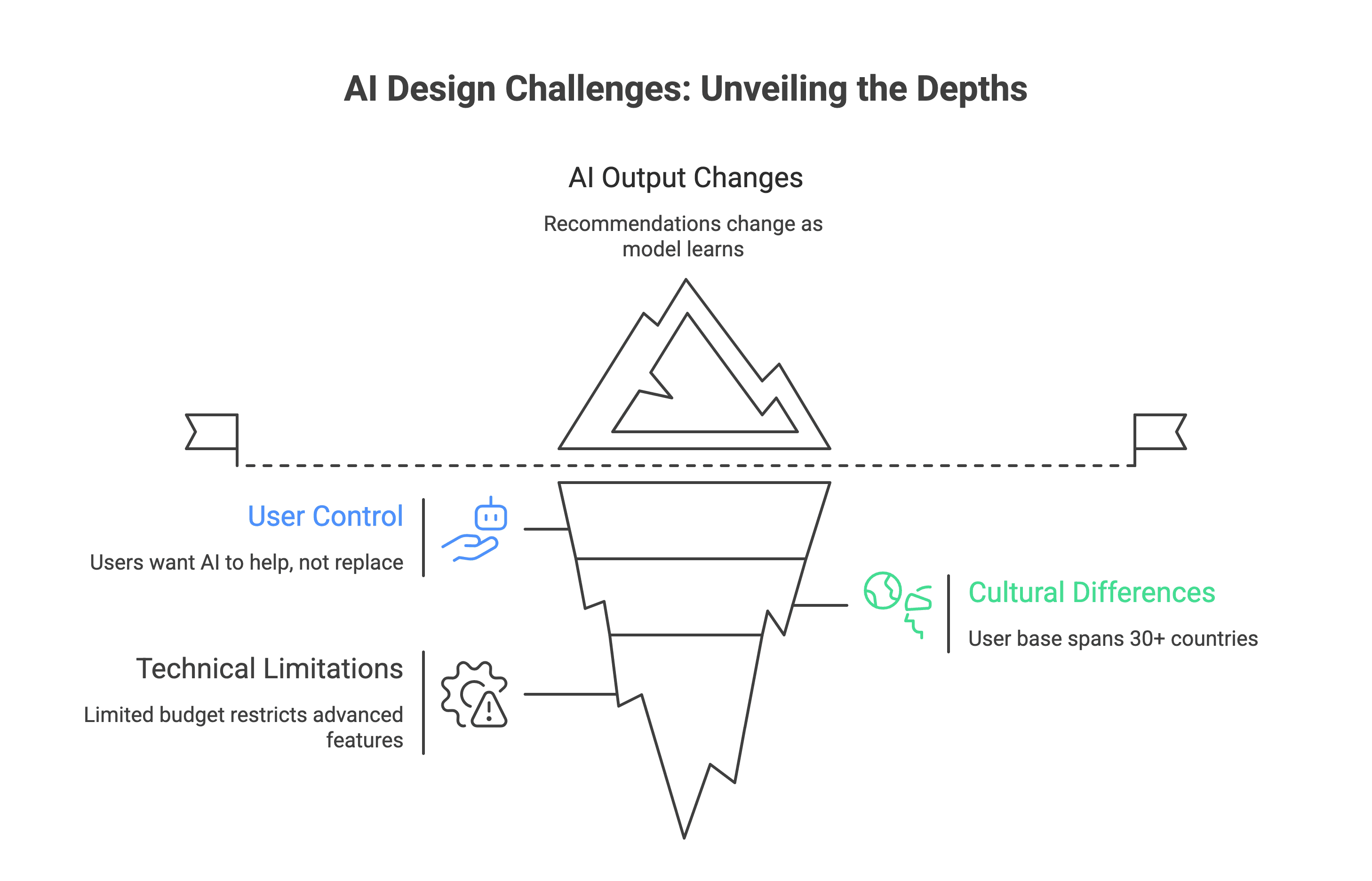

The Problem Space: When I joined DreamCollege AI at the ideation phase, the college admissions landscape was facing a critical trust deficit. Students and families were making life-changing decisions worth hundreds of thousands of dollars, yet they were hesitant to trust AI with guidance that could shape their futures.

Core Challenge: How might we design an AI-powered college counseling platform that feels as trustworthy, personal, and reliable as working with a human counselor while serving users at scale?

Why This Mattered

High stakes: College decisions in the US represent $591.1 billion in 2025

Trust barrier: 73% of prospective users expressed skepticism about AI making recommendations for major life decisions

Market gap: Traditional counselors serve 1-2 dozen students; we needed to serve thousands while maintaining quality

Timing pressure: Limited 4-month runway to launch with constrained budget and talent pool

My Role & Impact

What I Did

Led end-to-end design from 0→1, translating ambiguous, AI- and data-heavy business and user requirements into clear, user-centered experiences across:

Conversational AI interfaces (chatbot, AI tutor)

Multi-step onboarding and profile building (hub-and-spoke)

Payment flows and subscription management

Core product features (college matching, essay writing, activity exploration)

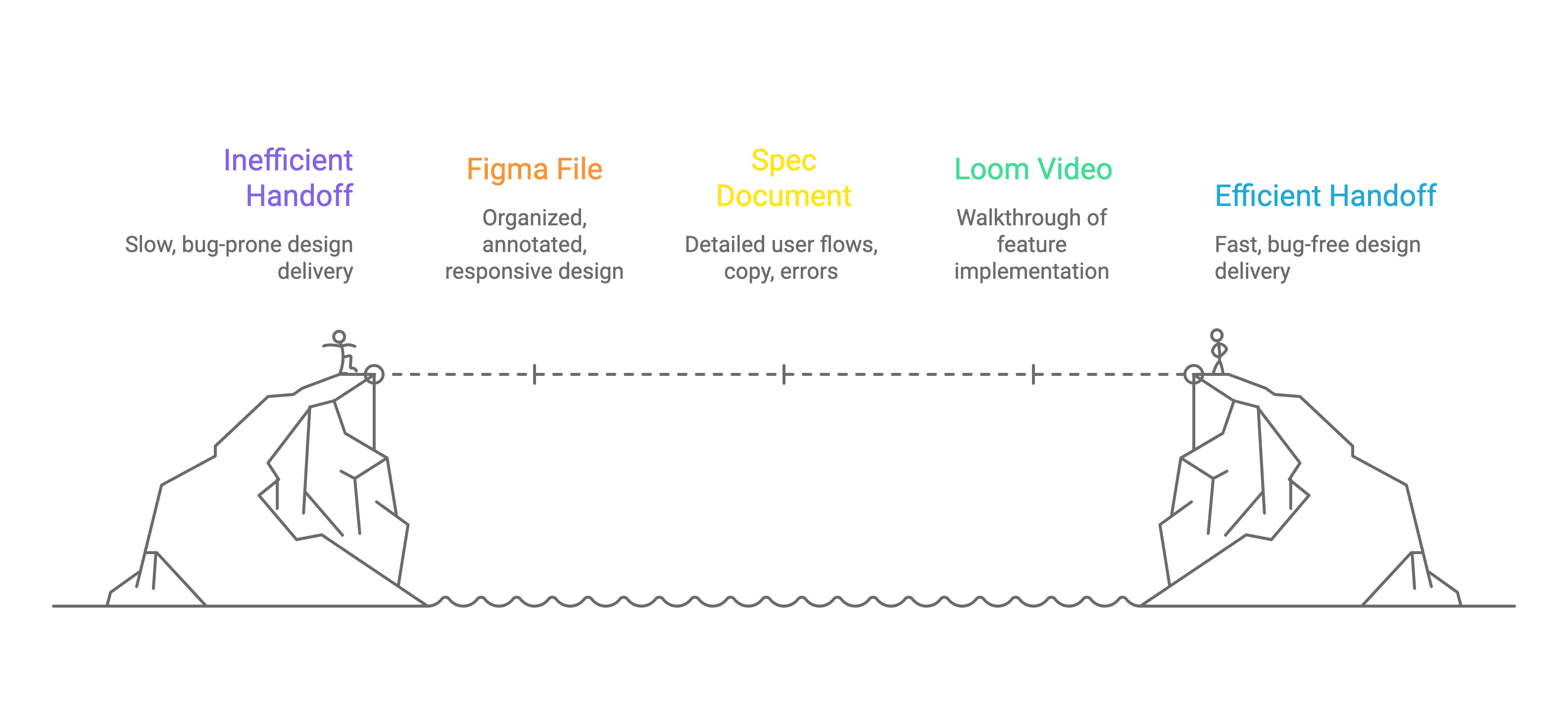

Established design foundation:

Defining the product's visual language and design system

Creating design tokens and naming conventions for seamless design-to-dev handoff

Building scalable component libraries that reduced design-to-development time

Art directing all user-facing touchpoints to ensure consistency and emotional trust
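
To make the token naming idea concrete, here is a minimal sketch of how a nested token set might be flattened into CSS custom properties for handoff. Every token name, value, and the `toCssVariables` helper are hypothetical illustrations, not the production DreamCollege tokens or tooling.

```typescript
// Hypothetical token set: all names and values are illustrative only.
type TokenTree = { [key: string]: string | TokenTree };

const tokens: TokenTree = {
  color: {
    text: { primary: "#1A1A2E", muted: "#5C5F73" },
    action: { primary: "#3D5AFE" },
  },
  space: { sm: "8px", md: "16px" },
  radius: { card: "12px" },
};

// Flatten nested tokens into CSS custom properties using a
// category-role-variant naming convention, e.g. --color-text-primary.
function toCssVariables(
  tree: TokenTree,
  prefix: string[] = []
): Record<string, string> {
  const out: Record<string, string> = {};
  for (const [key, value] of Object.entries(tree)) {
    const path = [...prefix, key];
    if (typeof value === "string") {
      // Leaf node: join the path segments into one variable name.
      out[`--${path.join("-")}`] = value;
    } else {
      // Branch node: recurse and merge the flattened children.
      Object.assign(out, toCssVariables(value, path));
    }
  }
  return out;
}

const cssVariables = toCssVariables(tokens);
```

With a convention like this, designers and developers refer to the same name (for example `--color-text-primary`) in design files and in code, which is the kind of shared vocabulary that shortens handoff.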

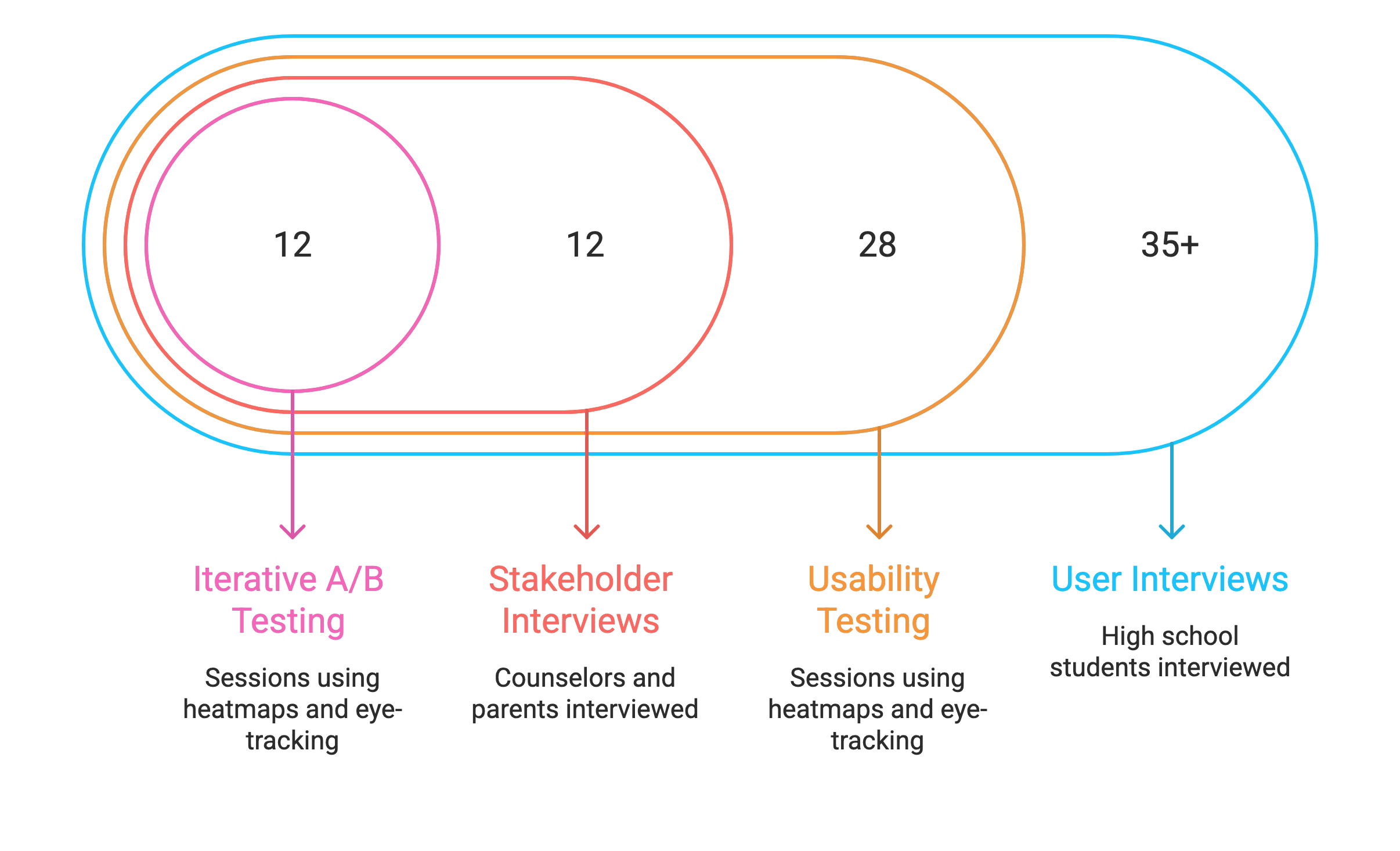

Drove research:

35+ user interviews with high school students (grades 9-12)

28 usability testing sessions using Hotjar heatmaps and Tobii eye-tracking

12 stakeholder interviews with counselors and parents

Iterative A/B testing on critical conversion points

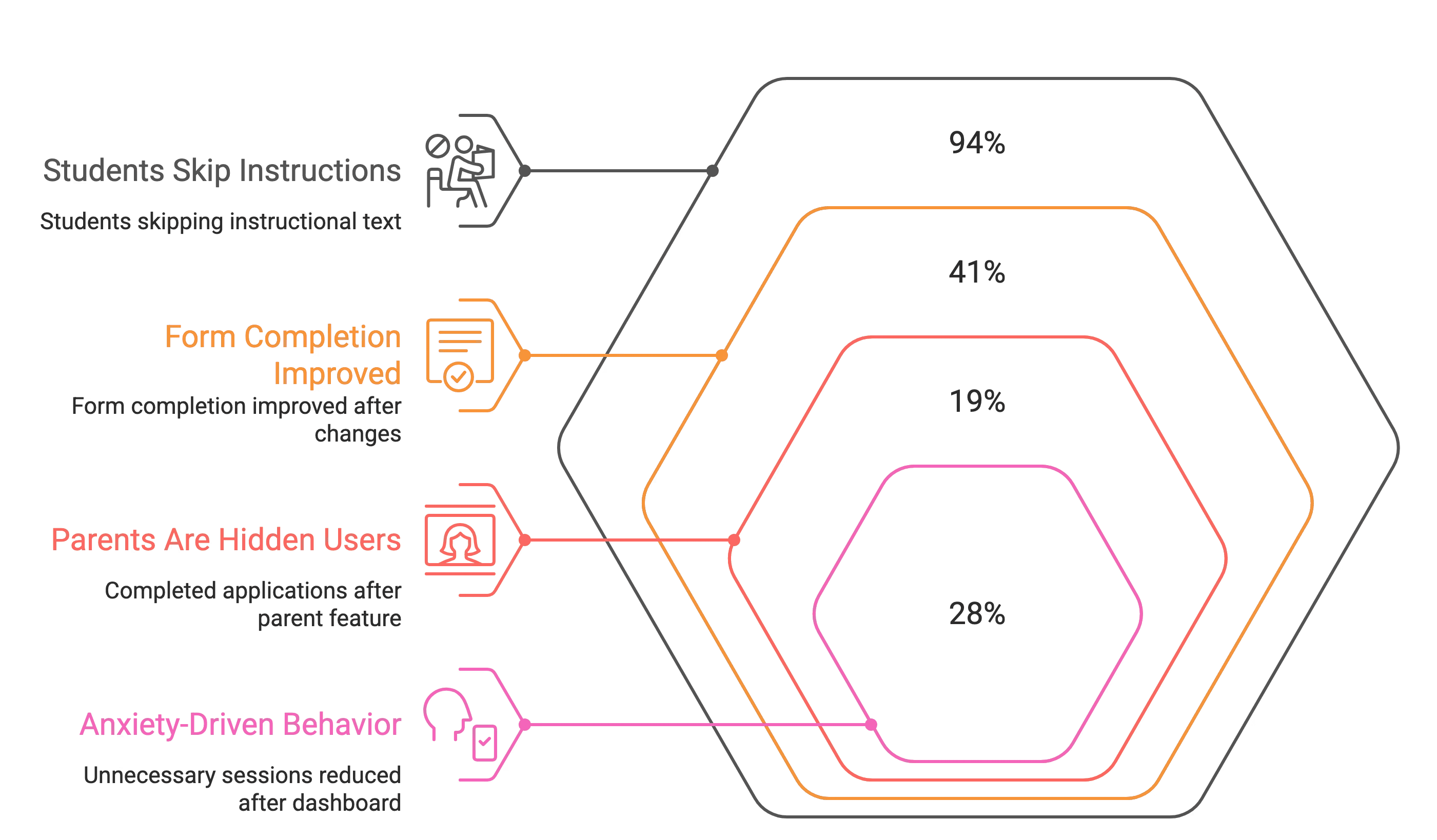

Key Problems

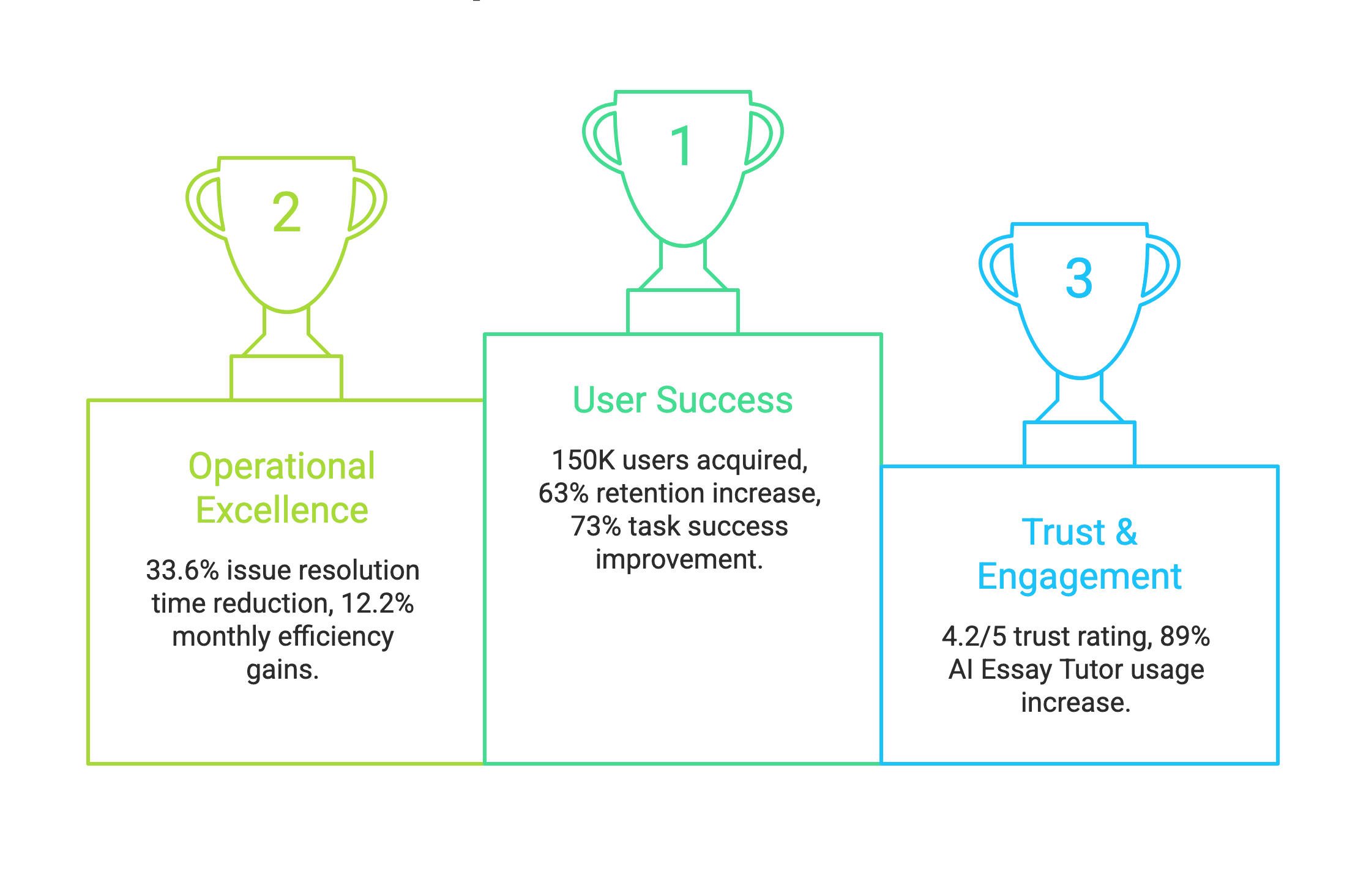

The Results

User Growth:

Scaled from 0 to 150K+ users in 4 months

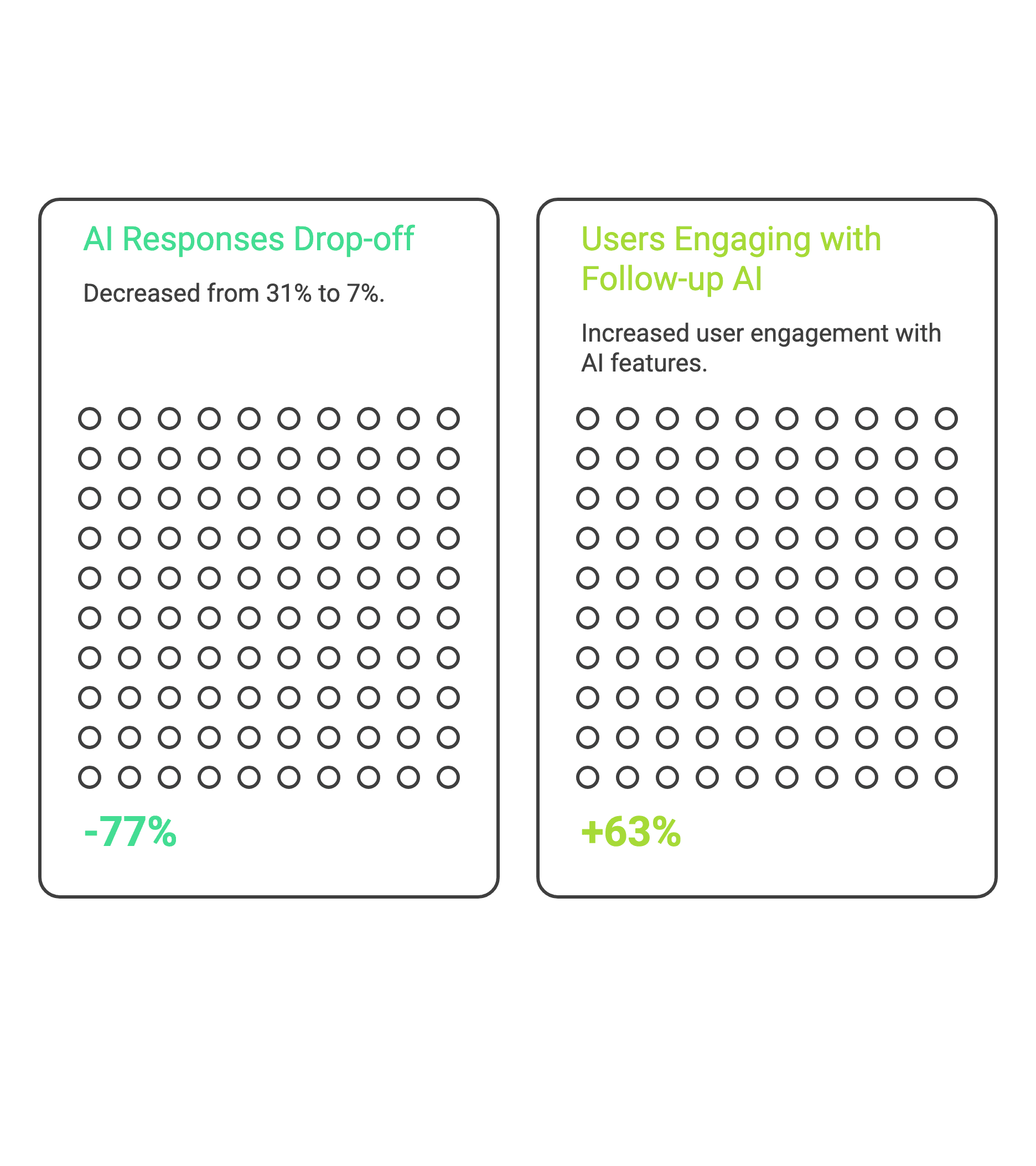

63% increase in user retention through conversational AI improvements

Operational Efficiency:

Reduced issue resolution time by 33.6% in month one, then by a further 12.2% month-over-month

Improved task success rate by 73% through simplified workflows and clear visual affordances

Engagement Metrics:

Boosted average session duration by 40% through responsive, trust-building interactions

Decreased task completion time by 52% (from 41% time-on-task to 19%) via streamlined information architecture

Team Efficiency:

Cut design-to-development handoff time by 45% through systematic design tokens and documentation

The Process

Research Methods:

Competitive analysis of 8 college counseling platforms (including Common App, Naviance, CollegeVine, and traditional counseling services)

Journey mapping across 6 user personas (varying by grade level, academic achievement, socioeconomic background)

Heuristic evaluation of existing AI chatbots in education (Khan Academy, Duolingo, Quizlet)

1. Discovery & Research (Weeks 1-2)

Understanding the Trust Problem

I began by immersing myself in the problem space. Through interviews with 35+ students and 12 parents/counselors, I identified three critical trust barriers:

Key Insight 1: "Black Box" Anxiety

Users felt uncomfortable when they couldn't see "what the AI was thinking." One student said: "It's like asking for directions from someone wearing a blindfold—even if they're right, I can't trust it."

Key Insight 2: The Comparison Trap

Students weren't comparing us to other AI tools—they were comparing us to their mental model of a "good counselor": someone who knows them deeply, explains their reasoning, and adapts to their unique situation.

Key Insight 3: Emotional Stakes ≠ Logical Stakes

Even when users logically understood AI capabilities, their emotional response was skepticism. This wasn't about features—it was about feeling safe with a high-stakes decision.

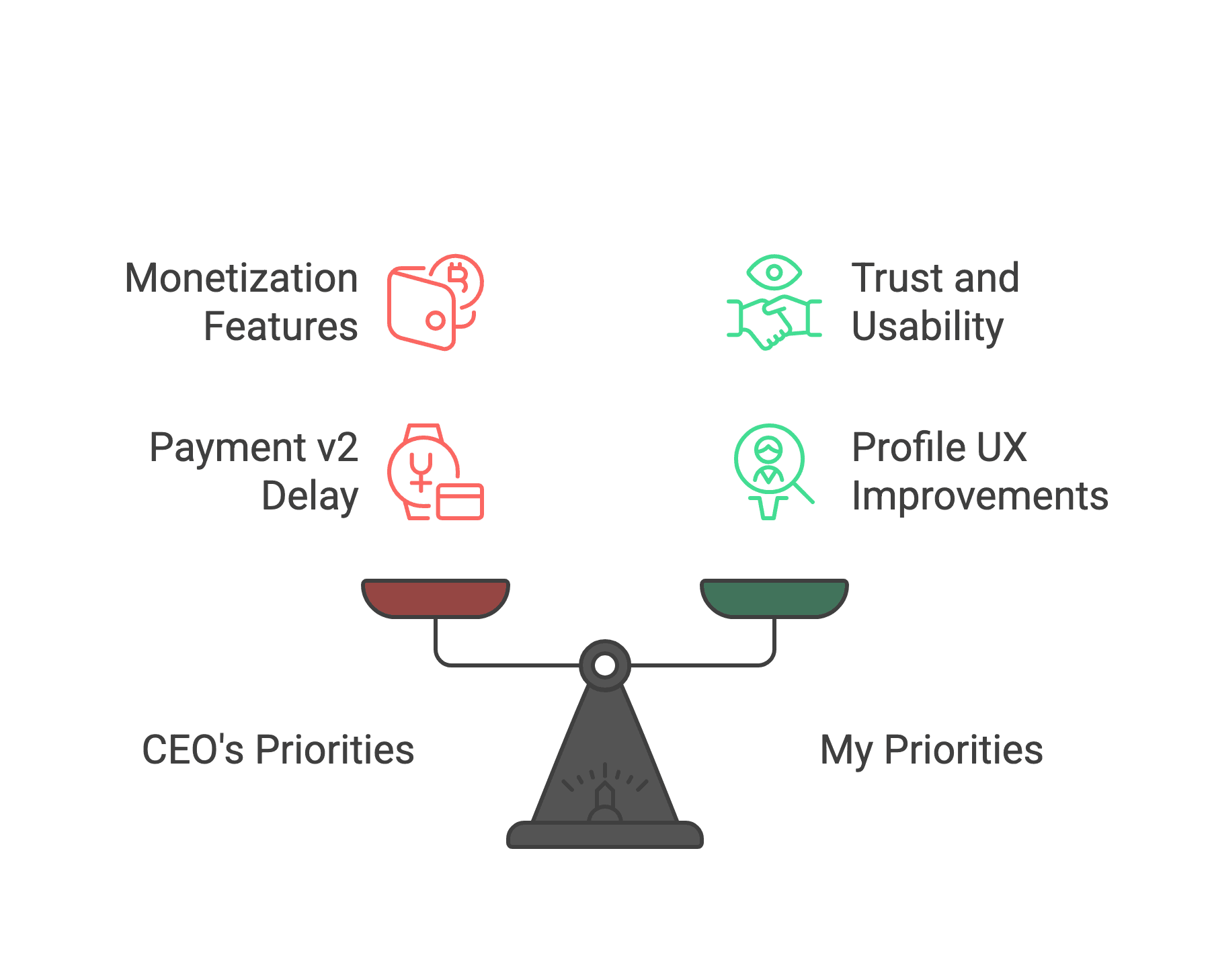

2. Problem Framing & Strategy (Weeks 2-3)

Reflections

What I'd Do Differently

Earlier User Testing

I wish I'd conducted usability tests in Week 2 rather than Week 5. We could have caught navigation issues sooner and saved 2 weeks of iteration.

What I'm Proud Of

1. Solving the Trust Problem

We didn't just build a tool; we built a relationship. Users feel confident making life-changing decisions because the AI earns trust through transparency.

2. Design System That Scales

The system I built isn't just serving 150K users today; it's architected to handle 10x growth without major refactoring.

3. Cross-Functional Leadership

I bridged design, engineering, and business, translating user needs into technical specs and business value. This skill will define my career.

Conclusion

DreamCollege AI taught me that great design isn't about making things pretty—it's about building trust when stakes are high, simplifying complexity without losing depth, and creating systems that scale.

In 4 months, we went from a blank Figma file to a platform serving 150,000 students making one of the biggest decisions of their lives. The visual design, conversational AI, and design system I built became the foundation for that growth—but the real achievement was earning users' trust.

This project proved that with clear principles, relentless user focus, and collaborative leadership, a designer can drive not just pixels, but business impact and meaningful outcomes for real people.